The COVID-19 deluge: Is it time for a new model of data disclosure?

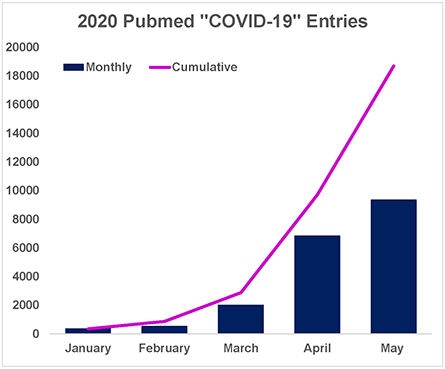

In the first five months of 2020, PubMed indexed 11,580 results for the keyword “COVID-19.” The number of articles in the National Center for Biotechnology Information’s archive increased steadily from 224 in December 2019 to nearly 7,000 in May. This deluge of scientific papers related to the pandemic provides a unique opportunity to review the core assumptions of the modern publication model.

As scientists turn their attention to understanding the novel coronavirus that causes COVID-19, the publishing system has adapted to rapidly disseminate COVID-19–related findings. For example, the first scientific report of a COVID-19 infection in the U.S. was published online in the New England Journal of Medicine on Jan. 31, just one day after the final clinical data were collected. Researchers and public health officials around the country needed these data to prepare for the outbreak; the work was cited more than 1,400 times in the four months after it was posted.

Rapid disclosure of data should not be limited to international health crises. The scientific community can use data only after they are disclosed, so why do months or years elapse between when data are collected and when they are shared? The benefits of reducing this delay are obvious in the case of COVID-19, but the same principle applies to all data.

The path to a scientific literature that rapidly and consistently captures all the data we generate is far from clear. In seeking such a path, I’ve looked at the success of preprinting in accelerating manuscript disclosure.

Rebalancing to make room for data

Uploading manuscripts to preprint servers such as bioRxiv and ChemRxiv speeds up the communication of work headed to peer review and publication. Preprinting also reframes the value of manuscripts: By disseminating submission drafts, authors acknowledge that the work therein is worth sharing with the scientific community regardless of peer review outcome. What preprinting accomplishes for manuscript drafts is also possible for stand-alone data.

Successful data-centric efforts within the life sciences include depositories such as the Protein Data Bank and Sequence Read Archive as well as publishing reform efforts such as Wellcome Open Research and the Structural Genomics Consortium’s Open Lab Notebooks. But the idea that data can be collected and reported without a pitch about the data’s implications has not been adopted widely. What would it look like if we shifted to reporting data for their own sake rather than solely in the framework of story-driven manuscripts?

Introducing data disclosure articles

I envision a future when results of experimental work can be preprinted or published separately from traditional journal articles. These new manuscripts would consist of polished data from a single study or related research questions. They could report the results of compound screens, preparation of valuable or challenging reagents, the structural model for a protein, bioinformatics tools, sequencing efforts or any field-specific minimum publishable unit of research work. These data represent additions to a field regardless of whether they motivate future studies or ever are included in traditional journal articles.

Data disclosure articles would not require peer review because they would not include discussion about the implications of the work. If not falsified or manipulated, data have objective value. While removing peer review from scientific publication is controversial, the scientific community can learn something even from poorly executed and communicated experiments; this often occurs regardless of peer review. While the threat of a reviewer’s close inspection may motivate more robust experiments, data articles would not be generated in a vacuum: They also would become pieces of traditional peer-reviewed journal articles. With this model, however, data disclosure does not wait for authors to generate an analysis.

Peer review evaluates whether a traditional journal article’s claims are supported by the data the authors include and cite. In my proposed model, the data can be preprinted and are not under review; rather, when a paper is submitted, the claims based on the data will be reviewed. By separating peer review from data disclosure, readers will see more clearly that the data and the claims based on those data are interacting but independent.

Reporting data in smaller, separate manuscripts has several advantages:

- These reports could appear in real time as larger projects advance. Others in the field could provide feedback on ongoing studies rather than retroactive analysis of work completed over many years.

- Data articles would be free from conjecture and meta-analysis as well as from the bias introduced by a journal’s reputation.

- Lowering the barrier to data disclosure would allow what are now unpublishable projects, such as replication studies or good ideas that didn’t pan out, to reach the broader scientific community.

- Reducing the time to disclosure might motivate pharmaceutical companies to share data that are tangential to their drug-discovery pipelines.

Potential pitfalls

Scientific literature is inundated with over 2 million unique manuscripts per year. Some people might argue that lowering the barrier to data disclosure only will increase this volume and could lead to publication of incomplete or poorly executed work. Without analysis by authors and reviewers, impactful data could be lost in the noise. In highly competitive fields, authors might hesitate to report results before a journal guarantees publication of the related article. To protect against these pitfalls, researchers will need to work toward a collective understanding of the minimum publishable unit and agree that preprinted data articles represent meaningful contributions to the scientific literature. Individual fields may need to develop new tools to curate and index data articles to aid in dissemination. These are challenging barriers, but if authors integrate this new model for data disclosure with the existing publishing mechanisms, their audience will be able both to gain access to data quickly and to appreciate novel findings.

First steps

To begin adopting this new model for data disclosure, I suggest authors preprint concise articles with an emphasis on the data presented. This will build momentum toward a publishing environment that encourages data disclosure as a necessary and independent scientific achievement. In this environment, all researchers, and the scientific enterprise, could function more effectively.

Scientists produce two things of separate but equal value: data and interpretation of those data. There is no reason our publication system should emphasize one of these at the cost of the other.

